Visuospatial Learning Capabilities of LLMs

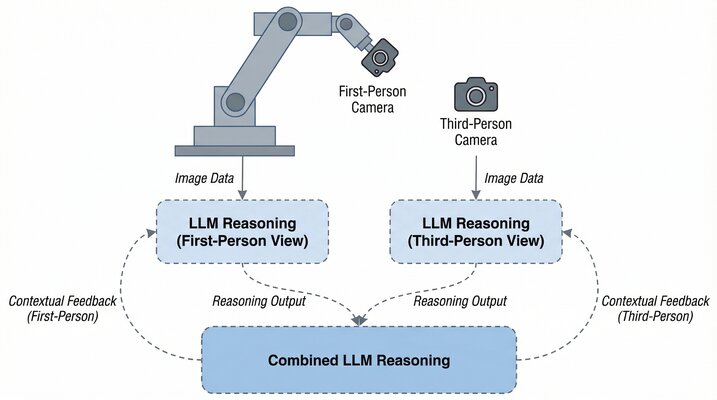

This project investigates the spatial reasoning of large language models (LLMs) using a robot arm equipped with two cameras: one mounted on the arm for a first-person view and one placed externally for a third-person view. By comparing the two visual perspectives, the research probes how language models perceive and reason about 3D space.